‘Liquid’ machine-learning system adapts to changing conditions

MIT researchers have developed a type of neural network that learns on the job, not just during its training phase. These flexible algorithms, dubbed “liquid” networks, change their underlying equations to continuously adapt to new data inputs. The advance could aid decision making based on data streams that change over time, including those involved in medical diagnosis and autonomous driving.

“This is a way forward for the future of robot control, natural language processing, video processing, any form of time series data processing,” says Ramin Hasani, the study’s lead author. “The potential is really significant.”

The research will be presented at February’s AAAI Conference on Artificial Intelligence. In addition to Hasani, a postdoc in the MIT Computer Science and Artificial Intelligence Laboratory (CSAIL), MIT co-authors include Daniela Rus, CSAIL director and the Andrew and Erna Viterbi Professor of Electrical Engineering and Computer Science, and Ph.D. student Alexander Amini. Other co-authors include Mathias Lechner of the Institute of Science and Technology Austria and Radu Grosu of the Vienna University of Technology.

Time series data are both ubiquitous and vital to our understanding of the world, according to Hasani. “The real world is all about sequences. Even our perception: you’re not perceiving images, you’re perceiving sequences of images,” he says. “So, time series data actually create our reality.”

He points to video processing, financial data, and medical diagnostic applications as examples of time series that are central to society. The vicissitudes of these ever-changing data streams can be unpredictable. Yet analyzing these data in real time, and using them to anticipate future behavior, can boost the development of emerging technologies like self-driving cars. So Hasani built an algorithm fit for the task.

Hasani designed a neural network that can adapt to the variability of real-world systems. Neural networks are algorithms that recognize patterns by analyzing a set of “training” examples. They’re often said to mimic the processing pathways of the brain; Hasani drew inspiration directly from the microscopic nematode, C. elegans. “It only has 302 neurons in its nervous system,” he says, “yet it can generate unexpectedly complex dynamics.”

Hasani coded his neural network with careful attention to how C. elegans neurons activate and communicate with each other via electrical impulses. In the equations he used to structure his neural network, he allowed the parameters to change over time based on the results of a nested set of differential equations.
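The article does not give the equations, so the following is only a minimal sketch of the idea, assuming the form commonly written for a liquid time-constant neuron: dx/dt = -(1/tau + f) * x + f * A, where f depends on both the input and the current state. Because f sits inside the decay term, the neuron's effective time constant changes with the incoming data. The single-neuron setup, the sigmoid gate, and all names and default values (`tau`, `A`, `w_in`, `w_rec`, `bias`, `dt`) are illustrative assumptions, not the authors' implementation.

```python
import math


def sigmoid(z):
    """Logistic function, used here as an assumed bounded nonlinearity."""
    return 1.0 / (1.0 + math.exp(-z))


def gate(x, inp, w_in=1.0, w_rec=0.5, bias=0.0):
    """Nested nonlinearity f that depends on the input and the hidden state."""
    return sigmoid(w_in * inp + w_rec * x + bias)


def ltc_step(x, inp, dt=0.1, tau=1.0, A=1.0):
    """Advance one neuron by dt with a semi-implicit (fused) Euler update.

    Assumed dynamics: dx/dt = -(1/tau + f) * x + f * A.
    """
    f = gate(x, inp)
    return (x + dt * f * A) / (1.0 + dt * (1.0 / tau + f))


def effective_time_constant(x, inp, tau=1.0):
    """Input-dependent time constant tau / (1 + tau * f) of the assumed dynamics."""
    f = gate(x, inp)
    return tau / (1.0 + tau * f)


def simulate(inputs, dt=0.1, x0=0.0):
    """Run the neuron over a sequence of scalar inputs and return its states."""
    x = x0
    states = []
    for inp in inputs:
        x = ltc_step(x, inp, dt=dt)
        states.append(x)
    return states


if __name__ == "__main__":
    # Hypothetical input stream: quiet for 20 steps, then a sustained signal.
    stream = [0.0] * 20 + [3.0] * 20
    states = simulate(stream)
    print("state before change:", round(states[19], 3))
    print("state after change: ", round(states[-1], 3))
    print("time constant, weak input:  ", round(effective_time_constant(0.0, -5.0), 3))
    print("time constant, strong input:", round(effective_time_constant(0.0, 5.0), 3))
```

In this sketch a stronger input raises f, which shortens the effective time constant, so the neuron responds faster when the data stream changes. That input-dependent behavior is the sense in which the parameters "change over time" here; a conventional recurrent unit with a fixed decay rate would not show it.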

This flexibility is key. Most neural networks’ behavior is fixed after the training phase, which means they’re bad at adjusting to changes in the incoming data stream. Hasani says the fluidity of his “liquid” network makes it more resilient to unexpected or noisy data, like if heavy rain obscures the view of a camera on a self-driving car. “So, it’s more robust,” he says.

There’s another advantage of the network’s flexibility, he adds: “It’s more interpretable.”

Hasani says his liquid network skirts the inscrutability common to other neural networks. “Just changing the representation of a neuron,” which Hasani did with the differential equations, “you can really explore some degrees of complexity you couldn’t explore otherwise.” Thanks to Hasani’s small number of highly expressive neurons, it’s easier to peer into the “black box” of the network’s decision making and diagnose why the network made a certain characterization.

“The model itself is richer in terms of expressivity,” says Hasani. That could help engineers understand and improve the liquid network’s performance.

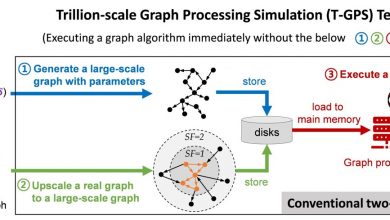

Hasani’s network excelled in a battery of tests. It edged out other state-of-the-art time series algorithms by a few percentage points in accurately predicting future values in datasets ranging from atmospheric chemistry to traffic patterns. “In many applications, we see the performance is reliably high,” he says. Plus, the network’s small size meant it completed the tests without a steep computing cost. “Everyone talks about scaling up their network,” says Hasani. “We want to scale down, to have fewer but richer nodes.”

Hasani plans to keep improving the system and ready it for industrial application. “We have a provably more expressive neural network that is inspired by nature. But this is just the beginning of the process,” he says. “The obvious question is how do you extend this? We think this kind of network could be a key element of future intelligence systems.”
